Bespoke Commerce at Scale: Announcing the Crystallize MCP Server and the Future of Agentic AI

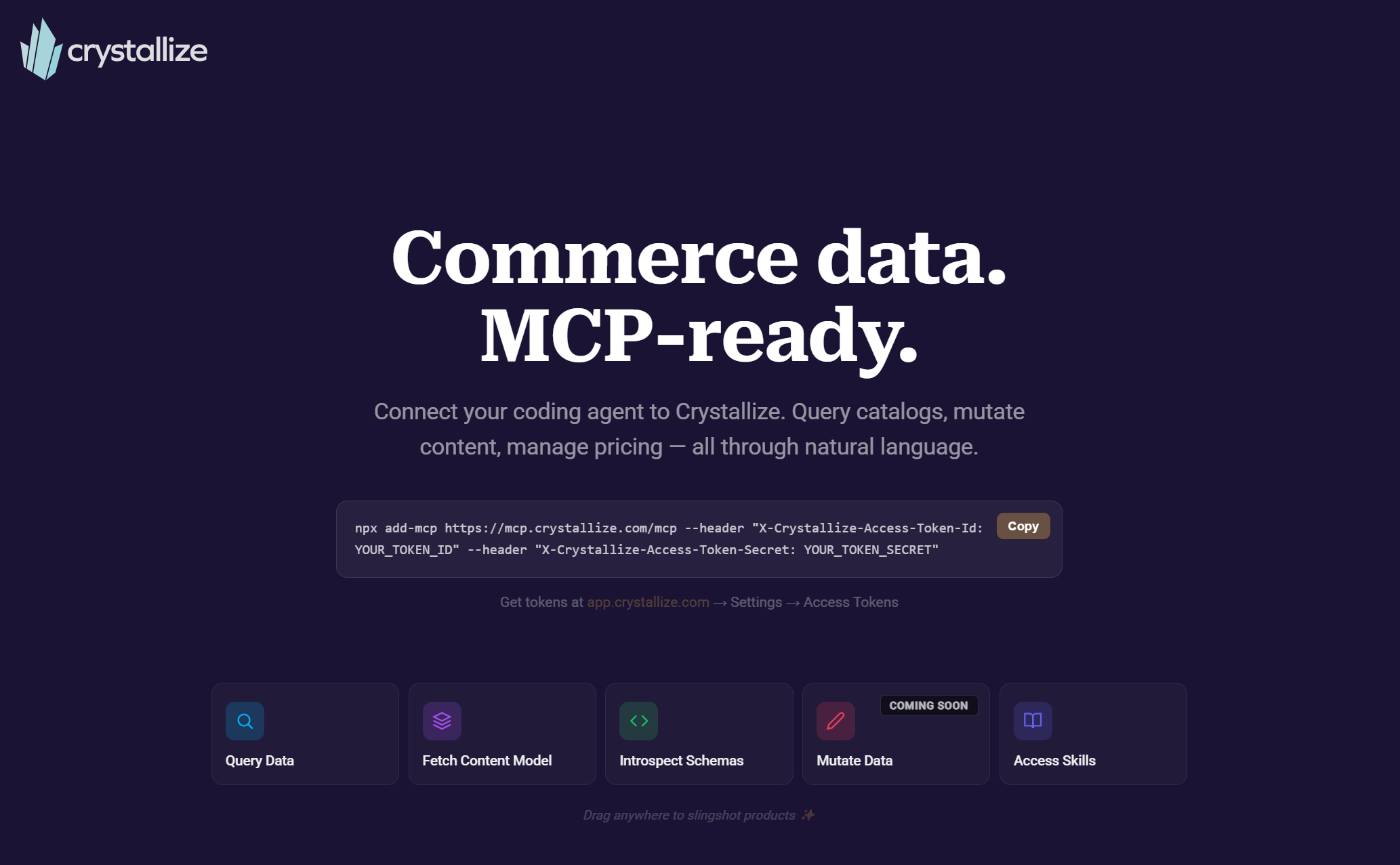

Connect your AI coding agent to Crystallize in minutes and let it inspect your schema, generate correct GraphQL, and query your tenant—all from your existing workflow.

AI in commerce is only as useful as the data it can understand and act on. The Model Context Protocol (MCP) solves the connection problem; Crystallize solves the structure problem. Together, they turn AI from a chatbot into something closer to an operator: schema-aware, context-grounded, and capable of working directly with real commerce systems.

If you’re building with AI today, the real question isn’t “Which model?” It’s: Can your platform expose capabilities and data in a way AI can reliably use? We are happy to share that Crystallize can.

We have officially released our Model Context Protocol (MCP) server, bridging the gap between raw AI reasoning and complex headless commerce data. It allows Large Language Models (LLMs) to navigate Crystallize schemas, query product catalogs, and apply architectural best practices directly within a developer’s native environment (Cursor, VS Code, Codex, or Claude Code) or within a non-developer application like Claude Desktop.

What MCP Actually Changes (and What It Doesn’t)

Model Context Protocol (MCP) is often described as a “USB-C for AI.” That’s directionally right: one interface instead of many. But the real value is more specific:

- It standardizes how AI agents discover and use tools.

- It exposes capabilities as structured, callable actions.

- It reduces brittle, one-off integrations between models and systems.

- It creates a layer where governance can live (authentication, scoping, rate limiting, and audit), all without burdening every client.
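To make "structured, callable actions" concrete, here is a minimal Python sketch of the two JSON-RPC 2.0 messages an MCP client sends to discover and invoke tools. The method names `tools/list` and `tools/call` come from the MCP specification; the tool name `catalog_query` and its arguments are hypothetical placeholders, not the names the Crystallize server actually exposes.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each request in a session gets its own id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. Discovery: ask the server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# 2. Invocation: call one tool by name with structured arguments.
#    "catalog_query" and its arguments are hypothetical examples.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "catalog_query", "arguments": {"query": "{ __typename }"}},
)

print(json.dumps(call_tool, indent=2))
```

Because every client speaks this same envelope, the server can change what a tool does internally without any client-side integration work.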

What it doesn’t do is magically make your system usable by AI. If your underlying data is messy, inconsistent, or poorly modeled, MCP just exposes that faster.

That’s where most platforms fall apart.

Why Is Crystallize Structurally Different?

Most commerce stacks weren’t designed for machine interaction. Data is scattered across services, loosely typed, and dependent on human interpretation. Crystallize takes a different approach:

- Semantic, schema-first modeling: products, variants, pricing, and content are explicitly structured.

- Unified APIs: Catalog, Shop, Discovery, and Core are consistent and queryable.

- Product + content + commerce together: no stitching across PIM + CMS + commerce layers.

- Shape-based architecture: predictable data patterns AI can introspect.

When you connect MCP to Crystallize, you’re not just exposing endpoints. You’re exposing a coherent system with all the semantics of your own business. That’s what makes AI usable, not just connected.

From API Access to “Intelligence Wrapper”

The Crystallize MCP server is a remote, cloud-hosted implementation using the HTTP streaming transport. It provides an intelligence wrapper around the platform's core APIs, enabling agents to act as expert consultants rather than just data fetchers.

The server currently exposes several critical tools:

- Knowledge Query Tool (exposing the Skills): A direct line to Crystallize’s documentation, data modeling best practices, and years of expert knowledge from live streams. This is the grounding layer, the thing that turns a generic LLM into a domain-aware assistant.

- API Wrappers: Specialized tools for the Catalog, Discovery, Shop, and Core APIs. These allow an agent to introspect a tenant's unique schema and run complex GraphQL queries without the developer needing to write a single line of syntax. The server provides query correction and optimization that makes it more than "just" a wrapper.

- Read-Only Safety: To ensure data integrity during early adoption, the current implementation is strictly read-only; all mutations are blocked at the server level, though agents can still generate mutation code for human review and execution.
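As an illustration of what "blocked at the server level" can mean in practice, the following is a minimal Python sketch of a read-only guard that rejects any GraphQL document containing a top-level `mutation` or `subscription` operation. This is an assumption about one possible approach, not Crystallize's actual implementation; a production server would more likely use a full GraphQL parser. The field names in the examples are hypothetical.

```python
import re

# GraphQL block strings and single-line strings (with escapes)
_STRINGS = re.compile(r'"""[\s\S]*?"""|"(?:\\.|[^"\\\n])*"')
_COMMENTS = re.compile(r"#[^\n]*")
_TOKENS = re.compile(r"[{}]|[A-Za-z_][A-Za-z0-9_]*")


def is_read_only(document: str) -> bool:
    """Return True if no top-level operation is a mutation or subscription."""
    # Blank out string literals first so a '#' inside a string is not
    # mistaken for a comment, then drop comments.
    stripped = _COMMENTS.sub("", _STRINGS.sub('""', document))
    depth = 0
    for token in _TOKENS.findall(stripped):
        if token == "{":
            depth += 1
        elif token == "}":
            depth -= 1
        elif depth == 0 and token in ("mutation", "subscription"):
            # Operation keywords only appear outside any selection set.
            return False
    return True


print(is_read_only("query { catalogue { name } }"))
print(is_read_only('mutation { item { delete(id: "1") } }'))
```

The check fails closed: a query whose operation name or variable happens to be called `mutation` would also be rejected, which is the safer error for a read-only server.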

Concretely, an agent can fetch the tenant’s content model (Shapes), introspect compacted GraphQL schemas for multiple Crystallize APIs, run GraphQL queries against the Catalog, Discovery, Core (Core Next), and Shop Cart APIs, and help build and validate mass operation files.

One of the most important design decisions here is the Skills-first workflow. Instead of letting the agent jump straight into queries, it starts by grounding itself in data modeling patterns, query best practices, system-specific constraints, etc. That changes the nature of AI interaction from probabilistic to guided, and from generic to domain-aware.
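One way to picture the Skills-first ordering is as a small client-side guard that refuses to run query tools until the grounding tool has been called. The Python sketch below is purely illustrative: the tool names `skills_query` and `catalog_query` are hypothetical, and nothing here claims the Crystallize server or any MCP client enforces the order this way.

```python
class SkillsFirstSession:
    """Tracks tool calls and insists that grounding happens before querying."""

    GROUNDING_TOOL = "skills_query"  # hypothetical tool name

    def __init__(self, call_tool):
        self._call_tool = call_tool  # callable(name, arguments) -> result
        self._grounded = False
        self.history = []

    def call(self, name, arguments):
        if name != self.GROUNDING_TOOL and not self._grounded:
            raise RuntimeError(
                f"call {self.GROUNDING_TOOL!r} before {name!r}"
            )
        result = self._call_tool(name, arguments)
        if name == self.GROUNDING_TOOL:
            self._grounded = True
        self.history.append(name)
        return result


def fake_transport(name, arguments):
    """Stand-in for a real MCP client; simply echoes the call."""
    return {"tool": name, "arguments": arguments}


session = SkillsFirstSession(fake_transport)
session.call("skills_query", {"question": "How should I model variants?"})
session.call("catalog_query", {"query": "{ __typename }"})
print(session.history)
```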

If you want to know more, have ideas, or would like to contribute, the Crystallize AI Repository is open-source.

Note: Can you find the Easter 🥚egg on this page? Happy 🐰Easter!

What You Can Actually Do Today: Best Use Cases

The current MCP implementation is intentionally read-only. That’s not a limitation; it’s sequencing. The best initial use cases are the ones where MCP eliminates friction but doesn’t require risky automation:

- Schema-aware onboarding: ask an AI assistant to inspect your Shapes and generate correct GraphQL query patterns against your tenant, not a generic example. It can also generate a valid mass operation file from a description of what you need.

- Commerce diagnostics and support: query product, pricing, cart, or content structures conversationally to answer “why is this not showing?” or “what changed?” without manually traversing multiple tools.

- Reusable automation foundations: build scripts and internal tools that let your team inject prompts + tool calls into workflows (for example, sales/marketing ops that need a precise slice of commerce data). This “same prompt, three ways” approach (coding agent, chat UI, and script) is demonstrated directly in Crystallize’s MCP walkthrough content.

Let’s put these into perspective with real-life scenarios. Imagine a developer prompts their agent: "Find all furniture items in abandoned carts from the last 24 hours. Check if any are currently discounted. If so, draft a personalized email." The agent uses the Core API tool to find carts, the Discovery API to check product shapes, and the Skills tool to draft the email according to best practices, all in about 63 seconds.
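The filtering logic behind that prompt can be sketched in a few lines of Python. In the scenario the cart and product records would come back from tool calls; here they are hard-coded, hypothetical records with made-up field names (`abandoned_at`, `list_price`, and so on) that do not reflect the real Crystallize response shapes.

```python
from datetime import datetime, timedelta, timezone


def discounted_furniture_in_recent_carts(
    carts, products, now, max_age=timedelta(hours=24)
):
    """Return (customer, sku) pairs for discounted furniture in recent carts."""
    cutoff = now - max_age
    matches = []
    for cart in carts:
        if cart["abandoned_at"] < cutoff:
            continue  # older than the 24-hour window
        for sku in cart["skus"]:
            product = products.get(sku)
            if product is None or product["category"] != "furniture":
                continue
            if product["price"] < product["list_price"]:  # currently discounted
                matches.append((cart["customer"], sku))
    return matches


# Hypothetical sample data for illustration only.
now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
carts = [
    {"customer": "a@example.com", "abandoned_at": now - timedelta(hours=3),
     "skus": ["sofa-1", "lamp-1"]},
    {"customer": "b@example.com", "abandoned_at": now - timedelta(hours=30),
     "skus": ["sofa-1"]},
]
products = {
    "sofa-1": {"category": "furniture", "list_price": 900, "price": 750},
    "lamp-1": {"category": "lighting", "list_price": 80, "price": 60},
}

print(discounted_furniture_in_recent_carts(carts, products, now))
```

The value the agent adds is not this loop itself but knowing which tools return the carts and prices, and in what shape, for a given tenant.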

Another example is a developer uploading a messy CSV of 500 products. The agent analyzes the data, queries the Skills tool for the best "Product Shape" patterns (such as SEO pieces and classification bridges), and automatically generates the GraphQL mutations needed to build a scalable architecture in Crystallize. Because the server is read-only, those mutations are handed back for human review and execution rather than run by the agent.

Our MCP Livestream digs deeper into this. Check it out.

CLI versus MCP: the transport question

Crystallize has a CLI, and we'll continue to expose capabilities there. So why invest in a dedicated MCP server? Because this isn't a CLI vs. MCP debate—it's a transport question.

MCP defines two main transports: stdio and HTTP. Within HTTP, the older SSE transport is being replaced by HTTP streaming.

The stdio transport spawns a local process on the developer's machine: the AI client pipes JSON in and gets JSON back. This is essentially what a CLI does. In fact, wrapping a CLI behind MCP stdio is a common pattern. It was also the earliest one, since HTTP streaming came later in the protocol, and it is one we could use for the Crystallize CLI, too.
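The "pipes JSON in, gets JSON back" framing is simple enough to sketch. In the MCP stdio transport, each JSON-RPC message travels as a single line of text. The Python sketch below uses an in-memory buffer in place of a real child process's stdin and stdout, and the helper names `write_message` and `read_messages` are made up for this example.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Serialize one JSON-RPC message as a single line."""
    # json.dumps escapes newlines inside strings, so the line stays intact.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")


def read_messages(stream):
    """Yield one parsed message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# In a real client these would be the spawned process's pipes;
# an in-memory buffer stands in for them here.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "id": 2, "method": "ping"})
pipe.seek(0)

received = list(read_messages(pipe))
print(received)
```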

But stdio has structural limits for a multi-tenant platform. Every user runs their own instance. There's no centralized way to enforce authentication, scope tenant access, apply rate limiting, or audit usage across a team. Governance lives in local config files.

The Crystallize MCP server uses HTTP streaming transport instead. It's remote, cloud-hosted, and sits behind infrastructure you can manage. It can be proxied, governed, versioned, and secured at the platform level. One deployment serves every client—Cursor, Claude Desktop, a Vercel AI SDK script, or a custom agent. Authentication and tenant scoping happen server-side.
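For comparison with stdio, here is a hedged Python sketch of the first message a client would POST over HTTP streaming. The `initialize` method, the `Accept` header listing both JSON and event-stream responses, and the `2025-03-26` protocol version string come from the MCP specification. The URL is the server link given in this article, but the exact endpoint path, the bearer-token authentication, and the client name are assumptions. The request is built but deliberately not sent, so the sketch runs offline.

```python
import json
import urllib.request

# Link from this article; the exact endpoint path is an assumption.
MCP_URL = "https://mcp.crystallize.com/"


def build_initialize_request(url: str, token: str) -> urllib.request.Request:
    """Build (but do not send) an MCP initialize request over HTTP."""
    body = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-03-26",
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The server may answer with plain JSON or an event stream.
            "Accept": "application/json, text/event-stream",
            # Bearer auth is an assumption; check the server docs.
            "Authorization": f"Bearer {token}",
        },
    )


request = build_initialize_request(MCP_URL, token="YOUR_TOKEN")
print(request.get_method(), request.full_url)
# urllib.request.urlopen(request) would send it.
```

Because this is ordinary HTTP, the request can pass through whatever proxies, gateways, and logging a team already runs.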

The CLI remains the right tool for direct, deterministic execution—and we'll be expanding its capabilities. The HTTP MCP server is the intelligence layer that any AI client can consume, with the governance that a multi-tenant platform requires. They're complementary: the CLI for when you know what you want, MCP for when the agent needs to reason about it first.

Strategic Business Value

The business story of MCP is leverage: instead of investing in a single AI interface that users must adopt, MCP lets Crystallize capabilities appear within whichever AI client a team already prefers.

From a business lens, that translates into measurable advantages:

- Shorter time-to-first-value: teams can go from “we have a tenant” to “we can query it correctly and build against it” faster, because MCP enables immediate schema introspection plus query execution in the same conversational loop.

- Lower enablement and support cost: when Skills and schema access are available through MCP, answers move closer to the point of work. The AI can retrieve best-practice guidance and concrete query patterns without the user having to leave their environment.

- Architectural debt insurance: much technical debt stems from "lazy modeling" (e.g., using generic text fields for everything). By using an AI grounded in battle-tested design patterns, businesses ensure their foundation is "Bespoke, Not Basic," capable of scaling to multiple markets and languages from day one.

- Higher partner velocity: agencies and integrators can prototype and validate faster, particularly when MCP is paired with ⚡Flare AI-style capabilities (mass operations, scaffolding, storefront generation). This is consistent with Flare’s “time to first success” positioning, which the manifesto argues is crucial to avoiding the momentum-killing “empty tenant” phase.

- Pluggable agentic workflows: The MCP server integrates cleanly with SDKs like the Vercel AI SDK, making it straightforward to add Crystallize capabilities to any script or application. The HTTP transport means the same server that powers your AI coding agent can power your internal ops tooling.

What Is Missing Right Now?

Three gaps matter most, and they map naturally to a “next iteration” story that includes ⚡Flare AI (more on that later):

- Read-write parity. Crystallize’s MCP is read-only today. That's deliberate for safe adoption while teams validate workflows. The next major value step is controlled write support: creating/updating items, managing orders/checkout flows, and evolving content structures via governed tool calls. The HTTP transport makes this safer than stdio would—write access can be scoped, rate-limited, and audited at the server level.

- Client surfacing beyond tools. The protocol can expose tools, resources, and prompts, but the livestream notes that, in practice, tools are what most clients handle well today—resources/prompts can be inconsistently surfaced depending on the AI client. This affects how “Skills,” docs, and reusable prompt patterns show up across environments.

- Governance and reuse. A core internal critique is that Flare-style intelligence should not be trapped in a single UI; it should live as reusable MCP tools/Skills so it can be integrated “anywhere.” Positioning MCP as the developer product and Flare as the UI layer is the proposed correction.

These aren’t missing pieces. They’re the next layer.
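To illustrate what "scoped, rate-limited, and audited at the server level" could look like once write access arrives, here is a minimal Python sketch of a gate that sits in front of tool calls. Everything in it is hypothetical: the `Gateway` class, the token-to-tenant mapping, and the sliding-window limit are assumptions for illustration, not a description of how Crystallize governs its server.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Gateway:
    """Hypothetical server-side gate: scope check, rate limit, audit trail."""

    allowed: dict                      # token -> set of tenants it may access
    limit: int = 5                     # max allowed calls per token per window
    window: float = 60.0               # window length in seconds
    clock: Callable[[], float] = time.monotonic
    audit_log: list = field(default_factory=list)
    _calls: dict = field(default_factory=dict)

    def authorize(self, token: str, tenant: str, tool: str) -> bool:
        now = self.clock()
        # Keep only the timestamps still inside the sliding window.
        recent = [t for t in self._calls.get(token, []) if now - t < self.window]
        if tenant not in self.allowed.get(token, set()):
            decision = "denied:scope"
        elif len(recent) >= self.limit:
            decision = "denied:rate"
        else:
            decision = "allowed"
            recent.append(now)
        self._calls[token] = recent
        # Every decision is recorded, including denials.
        self.audit_log.append((now, token, tenant, tool, decision))
        return decision == "allowed"


gateway = Gateway(allowed={"token-a": {"my-tenant"}}, limit=2)
print(gateway.authorize("token-a", "my-tenant", "update_item"))
print(gateway.authorize("token-a", "other-tenant", "update_item"))
```

With stdio, each of these checks would have to live in every user's local configuration; behind HTTP, one gate covers every client.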

Critical Analysis: The Flare-First Friction

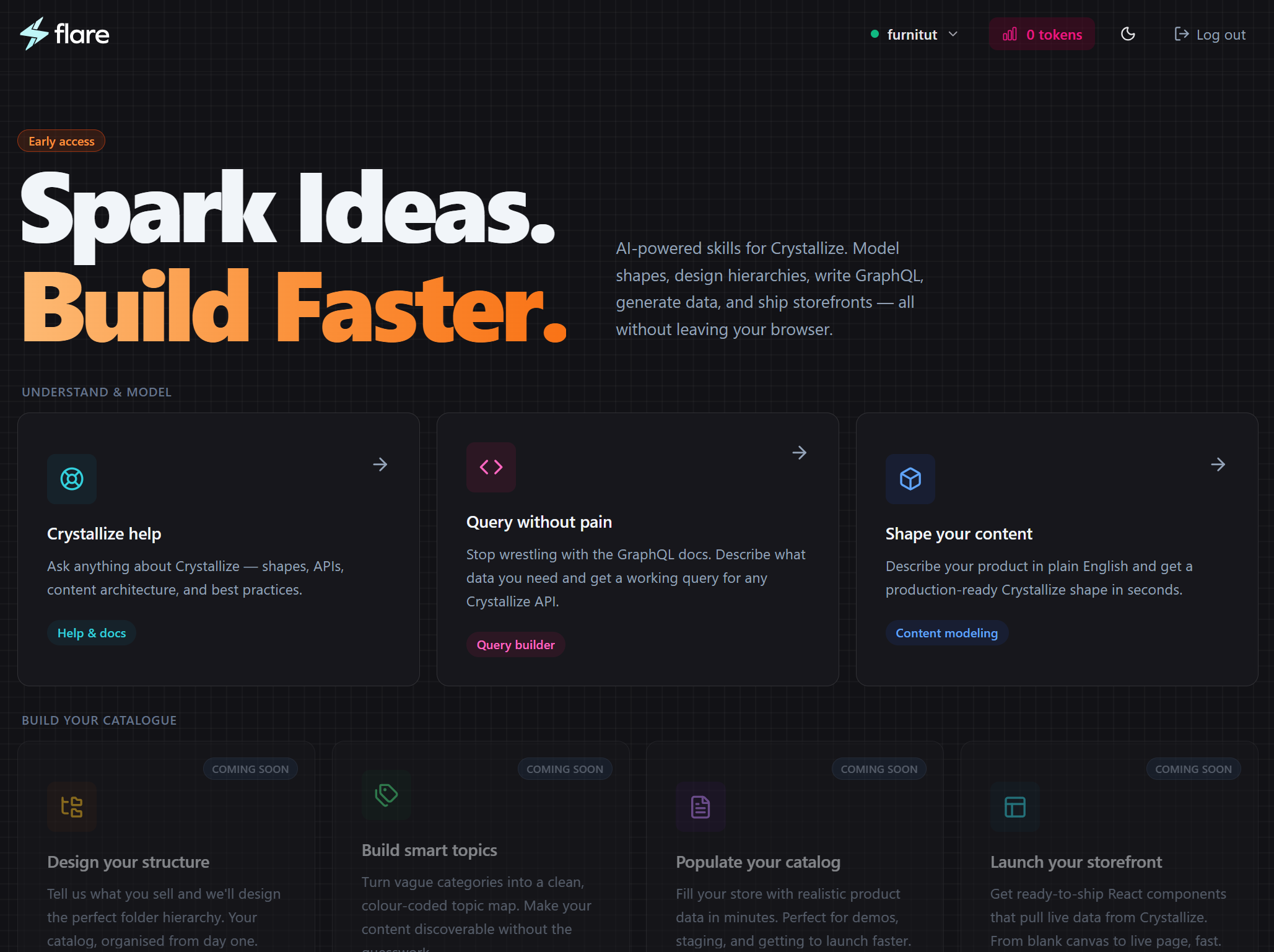

Let's be honest about sequencing. We developed ⚡Flare AI (a high-level chatbot interface) before exposing the core logic via a standardized MCP server (and Skills). From a pure developer-experience standpoint, this was arguably the wrong order because developers prefer to stay in their own environments (Cursor, VS Code) rather than switching to a separate UI.

But ⚡Flare AI validated the workflows before we productized them. We learned what questions developers actually ask, which Skills matter most, where agents get confused, and how schema introspection needs to work in practice. Building the friendly UI first was like writing the tests before the library.

⚡Flare AI showed what's possible. MCP makes it reusable everywhere. What started as an interface is becoming infrastructure.

Crystallize ⚡Flare AI: Prototyping at Warp Speed

⚡Flare AI has come up more than once already, so it deserves a proper explanation, even though it is still in development. It remains the most accessible entry point for teams who want to see what AI-assisted commerce looks like without configuring an MCP client. Built on Next.js 16 and powered by Claude, it functions as a "Project in a Box". Current capabilities include:

- AI Information Architecture: Consultative interviews that build scalable folder trees for multi-market businesses.

- AI Taxonomy: Creation of deep, hierarchical topic maps with smart, visual color-coding.

- AI Data Creation: Populating tenants with realistic test data and professional images via Pexels integration.

- Storefront Generation: Generating custom React/Next.js components tailored to the tenant's specific data shapes.

The future of Flare lies in "Continuous Re-Flaring"—the ability to launch new product lines or markets months after initial setup without breaking existing code. It will evolve into a proactive "Agentic Management" layer that suggests performance and merchandising optimizations based on live data.

The Future of Agentic Commerce

If you’re evaluating AI in commerce, the key question is not which model to use, but whether the platform backing your business is structured enough for AI to act on. MCP exposes that gap immediately. Connect an agent, inspect your schema, and you’ll see whether your commerce stack is AI-ready or just AI-adjacent.

Commerce is moving from manual setup, static configurations, and tool-switching workflows to orchestrated systems, AI-assisted operations, and continuous optimization.

MCP is the connective layer. Crystallize is the structured foundation underneath it.

Ready for a commerce technology that adapts to the business vision—not the other way around? Connect your agent to the Crystallize MCP server today and start building your first bespoke commerce engine.

Crystallize MCP Server: https://mcp.crystallize.com/

Crystallize ⚡Flare AI: https://flare.crystallize.com/

Productivity tools for Crystallize builders, powered by AI: https://crystallizeapi.github.io/ai/

AI at Crystallize YouTube series: https://www.youtube.com/playlist?list=PLiKqsi6CxPy7FbZEEUAz-GG-zenVK4FIv