Where To Host Your eCommerce Frontend?

You’ve spent countless hours developing a fantastic headless eCommerce frontend. Now it’s time to make it publicly available. Where should you host your store frontend?

In a headless commerce architecture, your frontend is decoupled from the backend, giving you the freedom (and responsibility) to choose where and how to host it. Choosing the right hosting platform can determine how fast your site loads, how well it scales under peak traffic, and ultimately how successful your online store will be in terms of performance, SEO, and conversion rates.

In fact, improvements in frontend speed have been shown to directly boost eCommerce conversions – for example, one retailer saw a 72% increase in conversion rate after moving from a monolithic setup to a headless architecture with a Next.js frontend hosted on Vercel (source).

The stakes are high: a slow or unreliable storefront can frustrate customers and hurt sales, while a fast, globally-available site can delight users and drive revenue.

So, where are you going to host your eCommerce frontend?

Why Frontend Hosting Matters in Headless Commerce

In a traditional monolithic eCommerce platform, hosting is often handled for you, but in a headless setup, frontend hosting becomes a critical piece of your architecture. Headless commerce splits your storefront (the frontend) from the backend commerce logic. This means you're free to pick modern frameworks and hosting without the backend holding you back. That flexibility comes with a catch: the latest hosting tech can supercharge performance and UX, but you have to choose wisely, because the choice directly affects how fast, reliable, and scalable your site is.

Performance is paramount in e-commerce for a simple reason: faster sites engage more users and convert better, because shoppers won’t wait around for slow pages. Hosting influences how quickly your content reaches users (e.g., through CDN distribution and server response times). A well-chosen hosting solution ensures your product pages and checkout load quickly around the globe, boosting SEO and conversions.

Conversely, a poor choice might lead to latency, downtime, or scaling issues during a big sale. Plus, headless setups live and breathe APIs and dynamic content, so your frontend host really needs to nail those API calls and edge logic. Good frontend hosting leads to better conversions and revenue, improved Core Web Vitals (which is great for SEO), and stability during traffic spikes. It isn't just a tech requirement; it's a seriously strategic move for both developers and the business.

Your choice of hosting platform is heavily influenced by your frontend rendering strategy. Are you generating most pages up front (static) or on demand for each user (dynamic)? Are you leveraging edge computing to run code close to users? These choices will guide you toward a suitable hosting platform. We’ve already talked about web rendering in the linked post, but let’s unpack the rendering models briefly, because they heavily influence your hosting requirements.

Static vs. Dynamic vs. Edge Rendering (and Why It Matters)

There are a few ways to structure how you serve up your content in headless builds, and each has its own unique implications.

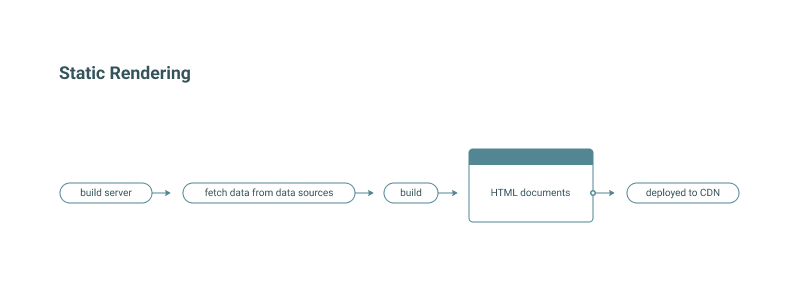

With Static Rendering (SSG), you pre-render pages at build time into static HTML, which can be served directly from a CDN. Static sites are fantastic for content that doesn’t change per user or need frequent updates – think marketing pages, blogs, or product catalogs that update only occasionally.

For e-commerce, static generation can handle product listing pages and articles extremely well, ensuring near-instant page loads. The trade-off is freshness: if prices, stock levels, or personalized info change frequently, static builds could show outdated data. Many modern frameworks mitigate this with Incremental Static Regeneration (ISR) or on-demand revalidation (e.g., Next.js can rebuild pages or parts of pages when content changes, so you get the speed of static with fewer staleness issues). In short, static rendering is fast and resource-efficient, but you’ll need strategies to handle real-time data updates.
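To make the ISR idea more concrete, here is a minimal sketch of the stale-while-revalidate pattern behind it. It is not how Next.js implements ISR internally; `createIsrCache`, `renderPage`, `revalidateMs`, and the injectable clock are hypothetical names chosen for illustration, and the renderer is assumed to be synchronous to keep the example short.

```typescript
// Hypothetical stale-while-revalidate cache illustrating the idea behind ISR.
// Not Next.js internals: every name here is made up for the example.
type CachedPage = { html: string; renderedAt: number };

function createIsrCache(
  renderPage: (path: string) => string, // assumed synchronous page renderer
  revalidateMs: number,                 // how long a page counts as fresh
  now: () => number = () => Date.now(), // injectable clock, handy in tests
) {
  const pages = new Map<string, CachedPage>();

  function render(path: string): CachedPage {
    const page = { html: renderPage(path), renderedAt: now() };
    pages.set(path, page);
    return page;
  }

  return {
    // Serve what is cached; if it is stale, re-render so the next
    // visitor gets fresh HTML (a real host does this in the background).
    get(path: string): string {
      const cached = pages.get(path);
      if (cached === undefined) return render(path).html; // first request
      if (now() - cached.renderedAt > revalidateMs) render(path);
      return cached.html;
    },
    // On-demand revalidation: drop a page when the backend reports a change.
    invalidate(path: string): void {
      pages.delete(path);
    },
  };
}
```

The visitor who triggers a revalidation still receives the stale copy; only the following request sees the new HTML. That is the trade-off to keep in mind for prices and stock levels, and the reason on-demand invalidation matters for commerce data.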

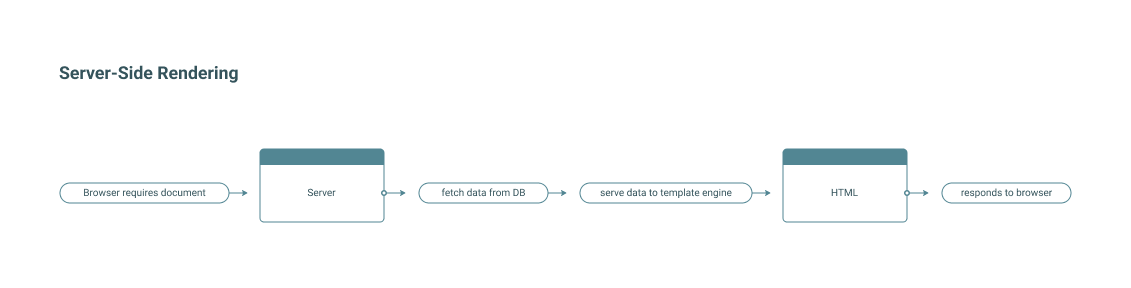

With Dynamic Server-Side Rendering (SSR), pages are rendered on-the-fly for each request (or for each user session) by running your app on a server. This is how traditional online stores (built with PHP or Ruby on Rails eCommerce platforms) worked, and frameworks like Next.js or Nuxt can also support SSR.

The benefit is fresh, highly personalized content – you can tailor pages to the user (showing their name, relevant offers, real-time inventory counts, etc.) and always fetch the latest data. The downside: each request hits a server, so response times depend on server performance and location. If your server is far from the user or under heavy load, it can be slower. Caching can alleviate some of this (and a good host will provide caching layers), but dynamic SSR generally demands more compute power and scalability from your hosting platform.
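As a rough illustration of how caching can take load off an SSR origin, the sketch below picks a `Cache-Control` header per page type. The directives themselves are standard HTTP, but the `PageKind` split, the function name, and the TTL values are assumptions for the example, and not every CDN honors `stale-while-revalidate`, so check your host's documentation.

```typescript
// Illustrative Cache-Control policy for SSR responses. The directives are
// standard HTTP; the TTL values and the PageKind split are assumptions.
type PageKind = "shared" | "personalized";

function cacheControlFor(kind: PageKind, sharedTtlSeconds = 60): string {
  if (kind === "personalized") {
    // Per-user HTML (cart, account) must never sit in a shared cache.
    return "private, no-store";
  }
  // Same HTML for everyone: let the CDN keep it for a short time and serve
  // a stale copy while it fetches a fresh render from the origin.
  const staleWindow = sharedTtlSeconds * 5;
  return `public, s-maxage=${sharedTtlSeconds}, stale-while-revalidate=${staleWindow}`;
}
```

With a policy like this, a product page rendered by SSR hits the server roughly once per TTL per edge location instead of once per visitor, while account and cart pages always bypass shared caches.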

Edge Rendering / Functions is an emerging and exciting middle ground. Edge rendering means running your code (rendering logic, API calls, personalization scripts) on serverless functions deployed to edge locations worldwide. Instead of one central server generating a page, a network of globally distributed functions can generate it from a location near the user, drastically reducing latency. For example, Cloudflare Workers, Fastly, or Vercel’s Edge Functions can run custom logic at the CDN edge.

For eCommerce, this can be game-changing: you might serve a mostly static product page from cache but run a small edge function to inject the user’s local pricing or stock availability, all within a few milliseconds from a nearby data center.
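The pattern described above can be sketched as a pure function that an edge function might call after reading the cached HTML. Everything here is hypothetical: the `<!--price-->` placeholder, the `injectLocalPrice` helper, and the sample prices are illustration only, and a real edge runtime would supply the visitor's country through its own request metadata.

```typescript
// Sketch of edge-side personalization on top of a cached static page.
// PRICE_SLOT, LocalPrice and injectLocalPrice are hypothetical names.
type LocalPrice = { amount: number; currency: string };

const PRICE_SLOT = "<!--price-->"; // placeholder baked into the static HTML

function injectLocalPrice(
  cachedHtml: string,
  country: string,
  prices: Map<string, LocalPrice>,
  fallbackCountry: string,
): string {
  // Prefer the visitor's country, fall back to a default market.
  const price = prices.get(country) ?? prices.get(fallbackCountry);
  if (price === undefined) return cachedHtml; // leave the page untouched
  const label = `${price.amount.toFixed(2)} ${price.currency}`;
  return cachedHtml.split(PRICE_SLOT).join(label);
}

// Made-up sample data for the example.
const localPrices = new Map<string, LocalPrice>([
  ["NO", { amount: 1299, currency: "NOK" }],
  ["US", { amount: 129.9, currency: "USD" }],
]);
const pageHtml = "<p>Price: <!--price--></p>";
console.log(injectLocalPrice(pageHtml, "NO", localPrices, "US"));
// prints "<p>Price: 1299.00 NOK</p>"
```

Because the heavy lifting (rendering the page) already happened at build time, the edge function only does a string substitution, which fits comfortably within typical edge runtime limits.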

The challenge is that edge environments can have runtime limitations (e.g., they might not support full Node.js or have execution time limits). Still, many platforms are adding support for edge computing because it offers the best of both worlds: global speed and dynamic capability.

In practice, many headless commerce teams adopt a hybrid approach: static generation for most pages, SSR for a few that need it, and edge logic for sprinkling dynamic personalization or running A/B tests, etc.

If you plan to pre-render everything, look for great CDN integration and build automation. If you need SSR, ensure the platform supports your framework’s server runtime. If you want to experiment with edge computing, pick a host that provides edge functions. Getting this alignment right will significantly impact your site’s performance and your development workflow.
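One way to turn that alignment into a checklist is to classify your routes and collect the hosting capabilities they imply. The sketch below is a rule of thumb under stated assumptions: the `RouteTraits` flags and the decision rules in `chooseRendering` are hypothetical simplifications, not a definitive method.

```typescript
// Rule-of-thumb mapping from route traits to a rendering model and the
// hosting capability it needs. The traits and rules are assumptions.
type Rendering = "static" | "ssr" | "edge";
type RouteTraits = { perUserContent: boolean; needsFullServerRuntime: boolean };

function chooseRendering(traits: RouteTraits): Rendering {
  if (!traits.perUserContent) return "static"; // same HTML for everyone
  return traits.needsFullServerRuntime ? "ssr" : "edge"; // heavy vs light logic
}

const hostingNeeds: Record<Rendering, string> = {
  static: "CDN integration and build automation",
  ssr: "support for your framework's server runtime",
  edge: "edge functions",
};

// Collect the distinct capabilities a set of routes demands from a host.
function hostingChecklist(routes: RouteTraits[]): string[] {
  const needs = new Set(routes.map((r) => hostingNeeds[chooseRendering(r)]));
  return Array.from(needs);
}
```

Running `hostingChecklist` over a product page, a blog post, and a lightly personalized landing page would yield two requirements (CDN and build automation, plus edge functions), which already narrows the list of candidate platforms.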

What to Look For in a Hosting Platform?

Before jumping into specific platforms, let’s outline some criteria.

First, consider your tech stack. What frontend framework or architecture are you using? For example, if you built your store with Next.js, that tends to lean toward platforms that excel at Node.js/SSR (like Vercel). Many platforms are fairly tech-agnostic, but it helps to narrow down to those that offer first-class support for your framework (e.g., Vercel for Next.js, Netlify for many static generators, etc.).

Think about your rendering model as mentioned: is your site mostly static or highly dynamic? This will dictate if you need a platform with serverless functions or long-running servers.

Also consider your team’s preference for serverless vs. traditional servers. In a serverless environment, your frontend (and possibly backend APIs) run on demand, scaling automatically. This means you don’t manage servers; the provider handles uptime and scaling. It’s fantastic for focus and typically cost-efficient for intermittent workloads (you pay per usage). Most platforms we discuss (Vercel, Netlify, Cloudflare, etc.) are serverless or managed platforms – you deploy your app, and they take care of the rest. The downside is less control: you can’t tweak the server OS or keep processes running indefinitely, and you might face vendor lock-in risks (relying on proprietary functions or building adapters).

On the other hand, long-lived servers (such as traditional VPSes or containers on a cloud VM) give you full control. Platforms like Fly.io or Upsun (formerly Platform.sh), or services like AWS EC2, let you run your own server processes. This can be more work (you or your DevOps team manage scaling, updates, etc.), but you gain flexibility. If you need to, say, run a custom binary, maintain a WebSocket server, or support a language the serverless platforms don’t, a traditional host might suit you better.

Decide how much control vs. convenience you need. Many eCommerce teams choose serverless for the storefront for simplicity, and perhaps use traditional hosting for supporting services like search or specialty backend services.

You should also weigh your deployment goals and business needs. If this is just a proof-of-concept or development environment, you can experiment freely on free tiers. But for a serious production store, you’ll want to research each platform’s track record. Consider reliability and uptime, performance and scalability (i.e., can the platform seamlessly scale), security, support and documentation quality, and, finally, the cost model (some charge per user seat or bandwidth, others per usage or instance size). Be especially mindful of seemingly “free” plans that aren’t for commercial use – more on that soon.

Honestly, the best way to figure out a platform is to just try it out. If you can, deploy your frontend on a few of the top platforms (most have free plans) and run some tests. Check your site's performance (e.g., Time to First Byte and full-page load), simulate traffic, and assess how smooth the development workflow is (CI/CD, environment variables, rollbacks, etc.). If you're short on time for hands-on testing, look up comparison articles or reviews from other devs (such as this one).
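For a quick first comparison, a small script can sample Time to First Byte against each trial deployment. The sketch below is an approximation rather than a lab-grade benchmark: it relies on the fact that `fetch()` resolves once response headers arrive, it includes DNS and TLS time on the first run, and the function names are made up for this example.

```typescript
// Rough TTFB probe. fetch() resolves once response headers arrive, so the
// elapsed time approximates time to first byte (DNS and TLS included).
async function sampleTtfbMs(url: string, runs: number): Promise<number[]> {
  const samples: number[] = [];
  for (let i = 0; i < runs; i++) {
    const start = Date.now();
    const response = await fetch(url);
    samples.push(Date.now() - start);
    await response.arrayBuffer(); // drain the body so the connection is freed
  }
  return samples;
}

// Nearest-rank percentile: p = 50 gives the median, p = 95 the slow tail.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.min(sorted.length, Math.max(1, rank)) - 1];
}
```

Comparing the median and the 95th percentile across hosts, ideally from several geographic locations, says more than a single request, since cold starts and cache misses mostly show up in the tail.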

Overview of Frontend Hosting Platforms for Headless Commerce

Below, we cover some of the most popular platforms (in no particular order), focusing on their tech, strengths, and use in a headless eCommerce context. All of these can host a React/Next.js (or similar) frontend, but each has its own sweet spot.

Vercel

Vercel is a developer-first, serverless platform built by the team behind Next.js. It’s often considered the gold standard for hosting Next.js applications, though it supports any frontend framework (React, Vue, Svelte, Angular). Under the hood, Vercel runs your apps on a global multi-cloud network with automatic CDN caching and deploys serverless functions geographically close to users. This means out-of-the-box fast global performance for both static and dynamic content.

For instance, Vercel seamlessly supports Next.js Incremental Static Regeneration (ISR), so you can serve pre-rendered pages and update them in the background when content changes. It also supports Server-Side Rendering and API routes via serverless functions, and even Edge Functions for ultra-low-latency responses at the edge.

🔴When to press deploy (the developer perspective and ideal use cases)? Vercel is an excellent hosting option for eCommerce frontends, particularly those that are hybrid static + dynamic and built with frameworks like Next.js. It offers zero-configuration deployment, handles Server-Side Rendering (SSR), and auto-scales using serverless functions. Developers appreciate its seamless experience, including Git integration for automated builds, preview URLs for every commit, and instant rollback.

From a business perspective, Vercel provides highly scalable, globally distributed infrastructure to handle traffic spikes and reduce DevOps overhead through full management.

💰The price of scale (commercial impact and costs). Vercel is a fast, easy-to-manage hosting option for modern eCommerce sites, especially when paired with a headless commerce API like Crystallize. The free Hobby tier is great for experimenting, but it is limited to non-commercial use, so launching an actual online shop requires a paid plan. Many find the cost reasonable for the convenience and performance, but it’s something to budget for as you grow.

Because it’s a bit of a black box (Vercel handles the internals), you are trusting them for uptime and speed, which they generally deliver well (plenty of startups and enterprises run on Vercel). One of our case studies, for example, used Next.js on Vercel to deploy a content-rich ski boot store (ZipFit) and achieved worldwide fast load times by serving most pages statically with on-demand revalidation.

Netlify

Netlify is another popular serverless frontend platform. It’s a tech-agnostic service (not tied to a specific framework) and is incredibly easy to work with for static sites and single-page applications. Like Vercel, Netlify provides a global CDN-backed infrastructure – when you deploy, your static assets and pre-built pages get distributed worldwide for fast delivery.

Netlify also offers serverless Functions (using AWS Lambda under the hood) and has recently introduced support for Edge Functions (powered by Deno) to run custom logic at the edge. It supports frameworks with static export or SSR capabilities (Next.js, Nuxt, SvelteKit, etc.), though historically it has been especially loved for sites built with Gatsby (do you remember it?), Hugo, Eleventy, and other static generators.

🔴When to press deploy? Netlify is an excellent hosting option for static and hybrid eCommerce frontends, offering a superb continuous integration and continuous deployment (CI/CD) workflow (push to Git for automatic build/deploy, atomic deploys, and instant rollbacks). It includes built-in features such as form handling, an identity service, and analytics. It is a common default for headless commerce examples alongside Vercel and supports SSR for frameworks like Next.js via functions (now more seamlessly). It may lack some of Vercel's specific Next.js optimizations, but it is quickly improving.

💰The price of scale. Netlify is a viable hosting option for eCommerce frontends, offering a free tier for commercial use and scalable paid plans with features like SLAs. It is known for fast, reliable performance globally, thanks to its use of CDNs, and is particularly well-suited for Jamstack architectures (static sites with API integration).

While it is a fully managed service that simplifies deployment and team collaboration, its focus on static sites means that heavily dynamic or server-side-rendered projects may push its limits (function invocations, cold starts) compared to alternatives like Vercel. Its fully managed nature also means it lacks the low-level server control some projects might need for custom network or edge logic.

Cloudflare Pages

Cloudflare Pages is a relative newcomer focused on static site hosting and frontend deployment on Cloudflare’s massive edge network. If you value raw performance and global low-latency delivery, Cloudflare’s network (with data centers in 300+ cities) is hard to beat. Pages started as a static host (perfect for Gatsby, Jekyll, etc.), but it has evolved to support SSR and dynamic functionality via Cloudflare Workers.

Essentially, you can deploy a Next.js (or SvelteKit, Astro, etc.) app to Cloudflare Pages, and it will run your SSR using edge functions (Workers) – meaning users around the world get their pages generated from a location very close to them. Cloudflare also offers instant cache invalidation, built-in CDN, and even KV storage or Durable Objects for state if needed. Security is a strong point: you get Cloudflare’s DDoS protection and SSL automatically. Integration with Git is straightforward: push your code, and Cloudflare Pages builds and deploys it.

🔴When to press deploy? Cloudflare Pages excels at global performance by serving static assets from the edge and running lightweight dynamic logic via Workers, delivering extremely fast TTFB worldwide. It’s a top choice for mostly-static eCommerce frontends thanks to unlimited free static bandwidth, and it works well for light SSR or API logic within Worker limits. The trade-off is a more developer-centric setup for advanced use cases, though framework adapters and solid preview and collaboration features smooth things out.

💰The price of scale. Cloudflare’s generous free plan makes it easy to get started, while paid tiers offer faster builds and advanced features; the main trade-off is slightly less framework hand-holding than with Vercel or Netlify. Teams already using Cloudflare often choose Pages to stay in one ecosystem and benefit from a commerce-ready CDN with strong security. With mature, high-performance Workers, it’s a compelling option if edge-level personalization and maximum global speed are top priorities.

AWS Amplify Hosting

AWS Amplify is Amazon’s solution for frontend deployment, and it’s part of the larger Amplify suite aimed at developers building full-stack applications. It provides a managed CI/CD pipeline and hosting service, tightly integrated with AWS’s cloud services. It supports static sites out of the box and, more recently, Server-Side Rendering support for frameworks like Next.js and Nuxt (Amplify will deploy your app using AWS Lambda and CloudFront behind the scenes for SSR). Essentially, Amplify Hosting can detect your front-end framework, build it, and deploy it to AWS’s global edge network (CloudFront CDN). It supports custom domains and HTTPS, and offers features such as password-protected deploy previews.

Because it’s AWS, you can also take advantage of other services easily – for example, connecting to an Amazon Cognito user pool for auth, or an AppSync/GraphQL API for your backend, etc., often with just some configuration in Amplify’s CLI or console.

🔴When to press deploy? AWS Amplify is a strong fit for teams already on AWS or looking for a single, enterprise-grade platform that scales reliably. It handles static sites and moderate dynamic needs well, including Next.js SSR via managed Lambda@Edge, while distributing content globally through CloudFront. Its big advantage is tight integration with AWS services, letting you keep frontend, backend, auth, search, and serverless logic under one unified workflow.

💰The price of scale. AWS Amplify offers strong scalability and reliability on top of AWS infrastructure, but comes with a more hands-on developer experience and usage-based pricing that requires monitoring. It’s a solid choice for teams comfortable with AWS who want fine-grained control and tight frontend-backend integration without fully managing their own infrastructure.

Fly.io

Fly.io takes a different approach: it lets you deploy full-stack applications (containers or apps) to regions around the world as easily as deploying to a single server. Fly is often described as a great way to run your own backend servers globally without the pain of managing infrastructure. Unlike the pure serverless platforms, Fly.io runs long-lived application instances (micro VMs) that you can scale and place in various cities.

For a headless frontend, this means you could run your Next.js/Node.js server in multiple regions (e.g., one in North America, one in Europe), and Fly will route users to the nearest instance. Essentially, you get global performance similar to an edge network, but you’re running your own servers (managed via Fly’s toolset).
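To build intuition for why multi-region placement helps, the sketch below picks the closest region by great-circle distance. This is an illustration only and not how Fly.io routes traffic (real platforms rely on anycast and network conditions rather than geometry); the `Region` type, the function names, and the approximate coordinates are assumptions for the example.

```typescript
// Illustration only: picks the closest region by great-circle distance.
// Real platforms route via anycast and network conditions, not geometry.
type Region = { code: string; lat: number; lon: number };

function haversineKm(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const toRad = (deg: number) => (deg * Math.PI) / 180;
  const dLat = toRad(lat2 - lat1);
  const dLon = toRad(lon2 - lon1);
  const a =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRad(lat1)) * Math.cos(toRad(lat2)) * Math.sin(dLon / 2) ** 2;
  return 2 * 6371 * Math.asin(Math.sqrt(a)); // 6371 km = mean Earth radius
}

function nearestRegion(lat: number, lon: number, candidates: Region[]): Region {
  if (candidates.length === 0) throw new Error("no regions configured");
  return candidates.reduce((best, r) =>
    haversineKm(lat, lon, r.lat, r.lon) < haversineKm(lat, lon, best.lat, best.lon) ? r : best,
  );
}

// Hypothetical two-region deployment (coordinates are approximate).
const deployedRegions: Region[] = [
  { code: "ams", lat: 52.37, lon: 4.9 },    // Amsterdam
  { code: "iad", lat: 39.04, lon: -77.49 }, // Northern Virginia
];
```

A shopper in Oslo is under a thousand kilometres from the Amsterdam instance but several thousand from Virginia, which is why adding a European region tends to cut round-trip time for European customers.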

🔴When to press deploy? Fly.io is a strong fit when you need a custom or dynamic backend and more control than typical serverless platforms allow. It lets you run full servers (Node, Go, Rust, custom binaries) globally with consistent performance, avoiding cold starts while still benefiting from anycast routing and global load balancing. You get a middle ground between traditional hosting and a PaaS: more operational responsibility, but excellent DX, built-in services like Postgres, and Fly handling the hard parts of global infrastructure.

For e-commerce specifically, Fly.io is a good fit if you want stateful features or long-running processes alongside your frontend. Say you have a real-time notification service, or you want to run background jobs – on Fly, you can, within the same environment as your frontend.

💰The price of scale. Usage-based pricing can be efficient, but unlike per-request serverless models, costs depend on how well you manage VM resources. Fly.io is generally stable and popular with startups thanks to its flexibility. The trade-off is more control and global reach in exchange for more hands-on DevOps work: because apps run as long-lived processes, server health, maintenance, and optimization are on you. If your Node process has a memory leak, for example, it’s your responsibility (Fly will restart the app if it crashes). In contrast, on Vercel/Netlify, each request is isolated.

Render

Render is a modern cloud platform often touted as an alternative to Heroku (in fact, many teams migrated to Render when Heroku discontinued its free plans). Render supports static site hosting (with a global CDN) as well as deploying web services (Docker or auto-detected runtimes for Node, Python, Go, etc.), background workers, and even databases.

For an e-commerce frontend, you could use Render’s static hosting for a fully pre-rendered site, or run a web service if you need SSR. Let’s say you run a Next.js app on Render using their Node environment: it won’t be serverless but an always-on instance (or multiple instances), which allows SSR without cold starts. Meanwhile, your static assets would be cached on their CDN. Render’s interface is quite user-friendly: you can connect GitHub and auto-deploy, and they have a dashboard to see logs, etc. It’s like a streamlined version of AWS/GCP, with sensible defaults.

🔴When to press deploy? Render is well-suited for full-stack and mixed workloads, letting you run custom APIs, background services, and static frontends in one place without the “static + functions only” constraints. It offers easy static site hosting with a global CDN and supports dynamic services such as Node, Elixir, GraphQL, websockets, and cron jobs, giving teams a lot of architectural freedom. Static content is delivered globally via CDN, while dynamic services run in chosen regions, with multi-region setups handled manually if needed.

💰The price of scale. Render uses predictable, instance-based pricing with free tiers, making costs easier to control than per-invocation serverless in many cases. It prioritizes a low-ops developer experience and lets you consolidate frontends, backends, and databases on one platform, though backend services require some resource tuning. The main trade-off is the lack of edge functions; in return, you get a flexible, all-in-one hosting setup that scales with application complexity.
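Whether instance-based or per-invocation pricing is cheaper depends on traffic, and the break-even point is simple arithmetic. The sketch below takes all prices as parameters; the function names are hypothetical, and any numbers you plug in should come from the providers' current price lists, not from this example.

```typescript
// Break-even between a flat instance price and per-request pricing.
// All prices are parameters; plug in real figures from the providers.
function breakEvenRequests(instanceMonthlyCost: number, costPerMillionRequests: number): number {
  if (costPerMillionRequests <= 0) throw new Error("per-request cost must be positive");
  return (instanceMonthlyCost / costPerMillionRequests) * 1e6;
}

function cheaperModel(
  monthlyRequests: number,
  instanceMonthlyCost: number,
  costPerMillionRequests: number,
): "instance" | "per-request" {
  const threshold = breakEvenRequests(instanceMonthlyCost, costPerMillionRequests);
  return monthlyRequests > threshold ? "instance" : "per-request";
}
```

With placeholder values of 25 per month for an instance and 2 per million requests, the break-even sits at 12.5 million requests a month. Below that, paying per request is cheaper; above it, the always-on instance wins, provided a single instance can actually handle the load.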

Upsun (formerly Platform.sh)

Upsun is an enterprise-grade, fully managed Platform-as-a-Service that lets you run your entire stack — frontends, backends, databases, caches, search, microservices, and more — from a single, Git-driven workflow, without owning your own infrastructure. It automates DevOps tasks like provisioning environments, networking between services, scaling, observability, and security compliance, and instantly spins up isolated environments on every branch to mirror production.

🔴When to press deploy? Upsun is best for eCommerce teams running complex, full-stack setups that include custom APIs, databases, and multiple services, not just a frontend. It excels when you need production-grade workflows like branch-based environments, strong governance, and compliance without managing your own cloud infrastructure. For frontend-heavy headless builds relying mostly on external SaaS, it’s often more power than you need.

💰The price of scale. Upsun makes sense from a business perspective when reliability, governance, and speed-to-market outweigh raw infrastructure cost. It reduces operational risk by bundling hosting, security, compliance, backups, and environment management into one predictable platform, which lowers the need for in-house DevOps and minimizes costly deployment mistakes.

Fastly

Fastly is a high-performance CDN used by many top eCommerce and streaming sites (they’re very “commerce-ready” for caching and instantaneous content invalidation). With Compute@Edge, Fastly allows developers to run custom logic at the edge nodes. This is somewhat analogous to Cloudflare Workers or Vercel Edge Functions, but Fastly’s approach uses WebAssembly under the hood for speed.

You can write in languages like Rust, JavaScript, or Go (compiled to WebAssembly) and deploy functions with a startup time of roughly 35 microseconds, which is extremely fast. Fastly’s edge can then handle requests and run your code closest to users. What does this mean for an e-commerce frontend? You could generate responses (even full HTML pages) at the edge or manipulate requests/responses for A/B testing, personalization, etc., all without hitting an origin server.
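As an example of the kind of logic that suits the edge, here is a minimal sketch of deterministic A/B bucketing. It is platform-neutral TypeScript and does not use Fastly's SDK; `fnv1a32` and `assignVariant` are illustrative names, and the visitor ID is assumed to come from a cookie or similar stable identifier.

```typescript
// Deterministic A/B bucketing that could run in an edge function.
// Hashing a stable visitor ID keeps each shopper in the same variant
// without a database lookup. Names here are illustrative.
function fnv1a32(text: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);         // hashes UTF-16 code units
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

function assignVariant(visitorId: string, variants: string[]): string {
  if (variants.length === 0) throw new Error("need at least one variant");
  return variants[fnv1a32(visitorId) % variants.length];
}
```

Because the assignment depends only on the visitor ID, every edge location reaches the same decision independently, so a shopper who lands on a different data center between page views still sees the same variant.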

🔴When to press deploy? Fastly is ideal when you need ultra-low-latency dynamic content delivered globally, with fine-grained control over caching, personalization, and routing at the edge. It lets you combine aggressive CDN caching with edge logic (via Compute@Edge) to inject real-time data like pricing or inventory close to users, while enabling near-instant cache purges across the network. The trade-off is more hands-on configuration, but in return, you get powerful building blocks for high-performance, custom eCommerce delivery.

💰The price of scale. Fastly is enterprise-proven and highly reliable, but its edge compute model comes with a steeper learning curve and limited language support compared to traditional hosting. Costs scale with traffic, yet it’s often competitive at high volumes because it offloads so much work from origin servers. Fastly typically complements rather than replaces your main backend, making it well-suited for large, performance-critical eCommerce setups where every millisecond matters.

The Others

Those are the key platforms in the landscape of headless frontend hosting. There are others out there too (honorable mentions: DigitalOcean App Platform for a simpler cloud host, Azure Static Web Apps, especially if you’re in the Microsoft/Azure ecosystem, Firebase Hosting for a Google-backed static site hosting with handy realtime database integration, GitHub Pages for very basic static needs, or old-guard Heroku for server apps). Each has its niche, but the ones detailed above are among the most commonly considered for modern commerce frontends.

Comparison of Hosting Platforms for eCommerce Frontends

To help visualize how they compare, here’s a quick feature-fit table.

(Notes: “SSR Support” here means the platform’s ability to handle server-side rendering for frameworks like Next.js out of the box. “Edge Functions” refers to running custom code at edge locations. CDN implies globally cached content delivery. CI/CD refers to deployment automation and the developer workflow. These platforms can often be used in combination – e.g., using Fastly in front of your Vercel app – but the table compares their primary offerings.)

| Platform | Best Suited For | SSR Support | Edge Functions | Global CDN | CI/CD Deployment |
| --- | --- | --- | --- | --- | --- |
| Vercel | Hybrid (SSG + SSR) frontends | Yes – first-class (Next.js, etc.) | Yes (Vercel Edge) | Yes (multi-cloud edge) | Automated & fast (Git push deploys, previews) |
| Netlify | Static or Jamstack sites (with some dynamic) | Partial – supports SSR via Functions | Beta (Deno-based Edge) | Yes (global CDN) | Automated (Git-based, seamless) |
| Cloudflare Pages | Static and Edge-rendered sites | Yes – via Cloudflare Workers (SSR at edge) | Yes (Workers at 300+ locations) | Yes (Cloudflare network) | Automated (Git integration, fast builds) |
| AWS Amplify | Static or hybrid apps on AWS | Yes – via Lambda@Edge for Next/Nuxt | Limited (supports SSR; other Lambda can be added) | Yes (AWS CloudFront) | Automated (Git, with AWS build service) |
| Fly.io | Custom full-stack apps (global) | Yes – run your own Node/SSR server instances | No (but can deploy to multiple regions) | Partial (regional instances) | CLI/App-driven (deploy Docker image globally) |
| Render | Full-stack web services or static sites | Yes – via persistent web services (Node, etc.) | No (no built-in edge network) | Yes (for static sites; single region for dynamic) | Automated (Git, with container builds) |
| Upsun (formerly Platform.sh) | Complex multi-service apps (enterprise) | Yes – supports Node, Python, etc. SSR services | No (expects external CDN for edge) | Optional add-on (not default) | Git-driven (build entire stack per environment) |
| Fastly | High-performance edge logic | Partial – can run SSR-like logic in supported languages | Yes (WASM-based functions at edge) | Yes (Fastly CDN) | CLI/API-driven (deploy code to edge) |

Finding Your Best Fit

The sheer number of hosting options can feel overwhelming, but the good news is you’re not the first to face this challenge. The key is to align the platform with your project’s technical requirements and your team’s needs. If possible, experiment with a few services in a staging environment and gather data (deploy speed, page load metrics, etc.). Read up on recent reviews or case studies (like ours): the ecosystem evolves quickly, and a platform that lagged last year might be leading now (and vice versa).

Remember that the effort you put into choosing the right host will pay off. It sets the foundation for your storefront’s user experience. A fast, reliable site means happier customers, better SEO, and more conversions. Conversely, if your site slows down or crashes during a big promotion, no amount of backend magic can make up for lost revenue or damage to your brand reputation. So it’s worth doing the homework now to set yourself (and your customers) up for success.

Finally, don’t forget the broader picture: frontend hosting is one piece of headless commerce architecture. To truly maximize results, you’ll want a robust backend (for product information, order processing, etc.) and a performant frontend framework. This is where a platform like Crystallize comes in – it provides a super-fast GraphQL backend for your commerce data, which pairs beautifully with whichever frontend host you choose. Many of the performance gains in headless setups come from using best-of-breed components at each layer. By adopting a headless mindset, you’re already on the path to better performance and greater flexibility.

💡Tip: If you’re interested in headless architecture best practices or ways to squeeze even more speed out of your frontend, check out our guides on [headless architecture benefits] and our [Frontend Performance Checklist]. These resources provide deeper insights into improving your eCommerce site’s speed and reliability.

If all this talk of performance and headless architecture has you intrigued, we invite you to follow the white rabbit deeper. Moving to headless commerce (with a solution like Crystallize as your backend) can unlock the full potential of these modern frontend platforms. You’ll gain the agility to iterate on your frontend, the power to deliver content across channels, and the performance benefits that come from decoupling and optimizing each part of your stack.

If you’re considering this journey, we at Crystallize are here to help – our platform is built to complement these modern hosting solutions, and we’ve seen first-hand how our customers succeed with the right combo of tools.

Feel free to reach out for a 1:1 demo or jump in and start building for free to experience how a headless approach can elevate your eCommerce frontend.

Follow the Rabbit🐰

Frontend Performance Best Practices and Checklist

Our Frontend Performance Checklist is a comprehensive, platform-agnostic guide that enumerates key front‑end best practices and optimizations for maximizing website speed and efficiency. It distills these performance strategies into an actionable checklist to help developers build faster, more efficient web applications.

The Best Frontend Framework Doesn't Exist, Only Trade-offs Do

The best frontend frameworks in 2026 aren’t about syntax; they’re about rendering boundaries, caching and control, and AI enablement. Explore how modern frontend frameworks actually fail in production, and how to choose one that works with your backend, not against it.

Headless Architecture: Benefits, Best Practices, Challenges, and Use Cases

Headless architecture is a modern approach to web development in which the front end (the "head") is decoupled from the back end.