Behind the Scenes of crystallize.com: All the Performance Tricks and Hacks

Putting those frontend performance checklist suggestions to work.

Website load performance at the very highest level can be simple. Consider this piece of HTML, which is likely the first thing you would write in an introductory HTML course:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Speed</title>

</head>

<body>

<h1>I am speed 🏎️</h1>

</body>

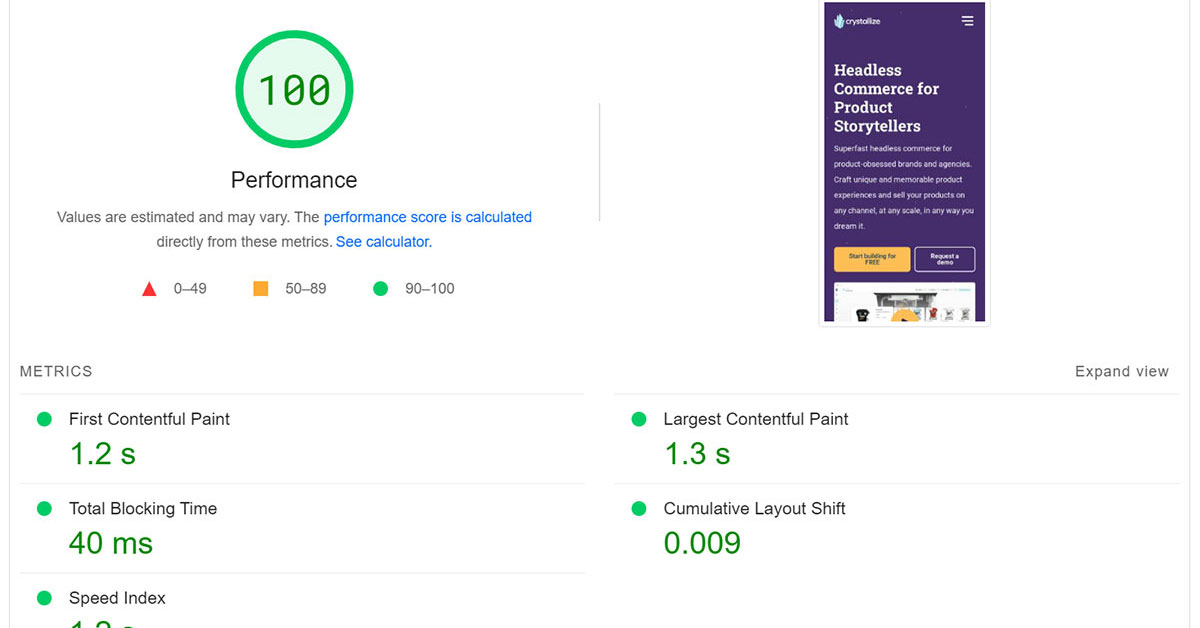

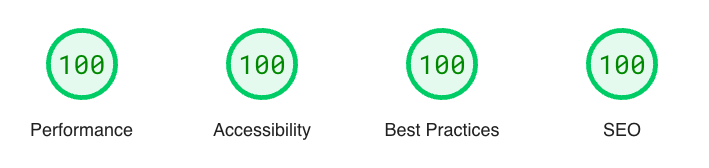

</html>Deploy this to the edge and run speed tests on it, and you will get 100/100 from Lighthouse and top scores from any other performance analytics service.

Top-tier performance is easy if all you serve the users is text. It continues to be easy after you add CSS on top of this too. Sure, you can make mistakes that would pull your performance score down, but those are easily identifiable and trivial to fix.

Things get tougher once you add visual effects. Images and videos take a lot more bytes to download and need careful consideration when added to the web page. Lazy loading and optimizing for all devices is key to keeping your performance at 💯

It’s when you turn your attention to interactivity that things start to get challenging. To make a website come alive, we generally have only one tool in our toolbox for the job: JavaScript. And with JavaScript comes trouble. Large, resource-intensive scripts that take a long time to execute will degrade performance and, in turn, user experience. Again, the solution is lazy loading and applying good fallbacks for situations where JavaScript has not yet loaded.

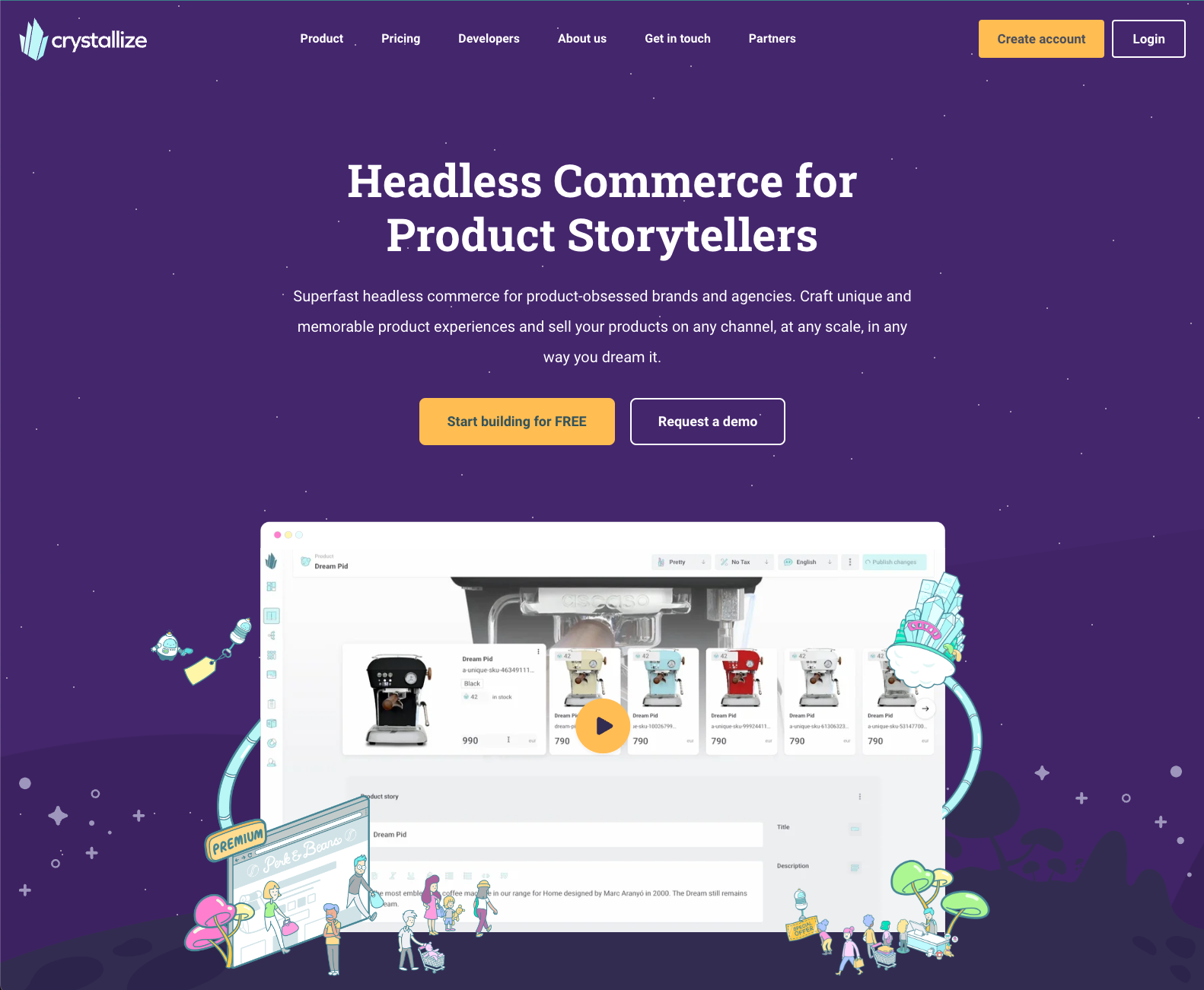

For crystallize.com, we wanted all of the above and lots of it. We have a website with a clear visual identity, and we want to make sure our brand values stand strong on every page. This takes tons of images mixed with a few videos here and there.

To make things more interesting, interactivity is added to the mix in image galleries, menus, and the showcasing of our APIs by embedding our Playground directly on the web page. The end result is vastly different from the HTML 101 example above, and we’ve tailored every part of it both to reach our ambitious visual goals and to retain the very best performance.

Continue reading to get the breakdown of how we crafted crystallize.com to serve as a lighthouse in the vast sea of poor site performance and unambitious visual identities.

📝Disclaimer.

I am analyzing only the front page in this article. Why? It is our most visually striking page, packed with loads of images and information. The principles we apply here are also applied almost everywhere else on crystallize.com.

Frontend Framework

The above quote is totally made up, but I bet it reads somewhat familiar to you. It is in some ways true that some frameworks are faster than others, but in order to maximize performance and fit the website to your needs, you probably need to shave off as much of the framework as possible and stick to the basics of the web.

This is what we’ve done. The site is built with Next.js using Static Site Generation, with each page rebuilt after every visit, plus once a day through a cron job for good measure. With the static files hosted on the edge, the initial HTML documents are delivered incredibly fast, with no need for a server to wake up and respond to the request. Pre-generated files in close proximity to the user will always win over any edge function.

That is where Next.js ends. We’ve turned off all framework JavaScript and are left with only the HTML and CSS. Sound familiar? This is what Astro is all about, for example, and we would probably have used that if it had been available back when we started the project. The tradeoff of turning off the framework JS is primarily that we have to roll our own bundling, transpiling, minification, and module loading.

This might sound like a terrible tradeoff to make, but keep in mind that this is a content-first site, and there is not that much interactivity to deal with. The small things we have are trivial to implement without the framework holding our hand.

We also lose that sweet quick single-page application route switching, but that is not that great of a loss on a site like ours, as the typical user doesn't browse all our pages in a single session but rather dives in on a few pages and spends time on each page. Quality over quantity. And any page navigation is still served from those aforementioned static files on the edge, so it is still fast.

Image Optimization

Crystallize.com consists of a bunch of images: a good mix of illustrations and rich images, as well as visual enhancers. Generally, all images are served with an <img /> tag. This allows us to use the browser-native loading=lazy attribute, which ensures that images are not loaded unnecessarily.

The most important images, typically the ones at the very top, are loaded with loading=eager coupled with fetchpriority=high to signal to the browser that these images really should get priority. Here is how things are typically served to the user.
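The split between eager and lazy images can be sketched as a small helper. This is only an illustration: the function names `imageHints` and `toAttributeString` are hypothetical and not part of any Crystallize package; only the attribute values come from the text above.

```javascript
// Hypothetical helper: picks loading hints for an <img> based on its position.
function imageHints(isAboveTheFold) {
  return isAboveTheFold
    ? { loading: 'eager', fetchpriority: 'high' } // top-of-page images
    : { loading: 'lazy' }; // everything else waits until it is needed
}

// Renders the hints as an HTML attribute string.
function toAttributeString(hints) {
  return Object.entries(hints)
    .map(function ([name, value]) {
      return name + '="' + value + '"';
    })
    .join(' ');
}

console.log(toAttributeString(imageHints(true)));
// loading="eager" fetchpriority="high"
console.log(toAttributeString(imageHints(false)));
// loading="lazy"
```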

Regular Images

Most images on the page, like the example below, are served through the Crystallize CDN. The markup for these images is created by our open-source image package @crystallize/reactjs-components. It generates the appropriate srcset for all the image formats Crystallize generates for you, including AVIF and WebP, which is a tremendous help for shaving off those bytes.

Here is what the markup for the image above looks like:

<figure>
  <picture>
    <source srcset="https://media.crystallize.com/crystallize_marketing/21/10/29/5/@100/collaborative_marketing.avif 100w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@200/collaborative_marketing.avif 200w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@500/collaborative_marketing.avif 500w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@768/collaborative_marketing.avif 768w" type="image/avif" sizes="600px">
    <source srcset="https://media.crystallize.com/crystallize_marketing/21/10/29/5/@100/collaborative_marketing.webp 100w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@200/collaborative_marketing.webp 200w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@500/collaborative_marketing.webp 500w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@768/collaborative_marketing.webp 768w" type="image/webp" sizes="600px">
    <source srcset="https://media.crystallize.com/crystallize_marketing/21/10/29/5/@100/collaborative_marketing.jpeg 100w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@200/collaborative_marketing.jpeg 200w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@500/collaborative_marketing.jpeg 500w, https://media.crystallize.com/crystallize_marketing/21/10/29/5/@768/collaborative_marketing.jpeg 768w" type="image/jpeg" sizes="600px">
    <img src="https://media.crystallize.com/crystallize_marketing/21/10/29/5/collaborative_marketing.jpg" alt="" width="768" height="771" role="presentation" loading="lazy">
  </picture>
</figure>

As you can see from the code snippet above, the browser is not short of options. It picks the most appropriate image for the circumstances, taking into account the device screen dimensions, the current connectivity (good Wi-Fi or bad 3G?), screen pixel density, and previous cache entries.
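To make the srcset pattern in that markup easier to see, here is a minimal sketch that rebuilds the AVIF srcset from its parts. It only mirrors the URL shape visible in the markup (`/@<width>/<name>.<extension> <width>w`); the function `buildSrcset` is hypothetical and is not how @crystallize/reactjs-components is implemented.

```javascript
// Hypothetical helper: builds a srcset string from a base URL and a list of widths,
// following the URL shape seen in the markup above.
function buildSrcset(baseUrl, fileName, extension, widths) {
  return widths
    .map(function (width) {
      return baseUrl + '/@' + width + '/' + fileName + '.' + extension + ' ' + width + 'w';
    })
    .join(', ');
}

const base = 'https://media.crystallize.com/crystallize_marketing/21/10/29/5';
const widths = [100, 200, 500, 768];

// One srcset per format; the browser uses the first <source> type it supports.
const avifSrcset = buildSrcset(base, 'collaborative_marketing', 'avif', widths);
console.log(avifSrcset);
```

Calling the same function with 'webp' and 'jpeg' would produce the other two `<source>` entries.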

Using the up-front image generations from Crystallize, users don’t have to wait for new images to be generated, which is the case for Next.js image and similar services. We also get the benefit of shorter image URLs, as we link directly to the image source, not via a proxy service.

Ambient Images

Some of the images are not there to complement a piece of content but rather to contribute to the general look and feel of the website. Here is an example that is used for a wrapper around the top video player. As you can see, the image is cropped at the top right section, which is where the video will be embedded.

We load this slightly differently than the regular images by not utilizing srcset, and instead serve a single PNG file, highly optimized for its single purpose. An illustration with transparency, few colors, and a simple stroke pattern is a perfect candidate for PNG, and as such, it weighs in at 39 KB.

<img src="/static/illustrations/video-left-illustration.png" loading="lazy" alt="" role="presentation" width="800" height="950" />

You could apply the same strategy we use for regular images here, but you would not save a whole lot in total byte transfer. We opted for some savings on the HTML side instead, slicing off the extra bytes it takes to generate the proper figure+picture+source and srcset attributes.

⭐️ Lighthouse Note.

Lighthouse performance score increased by an average of 15 points compared with not using srcset and optimized image formats.

Video Optimization

Videos are tricky, as they demand much more bandwidth than images.

They can be just as adaptive to browser and user circumstances, but that requires transcoding the video to the formats supported by different platforms, as well as a video player framework to serve and play back those formats properly. There is no native help from the browser like we have for images (at least not yet).

Video transcoding is easy: we use the automatic video transcoding in Crystallize to generate .m3u8 and .mpd playlists for us. To play those back, we need a client-side library, for which we’re using video.js. The video playlists from Crystallize are optimized for multiple devices and network conditions, and video.js adjusts the experience by loading better or poorer quality video depending on the user's device and its circumstances.

Since all the complexity of video generation and playback is handled outside of the website's realm, we need to ensure that the videos and video.js are not loaded until they are actually needed. Video.js weighs in at 170-ish KB, which is not massive, but we still hold it back until the visitor decides to start a video playback. After that, it is cached for the rest of the user session.

Before the video is loaded, we show a fallback thumbnail image with the same aspect ratio as the video, ensuring that the page content does not jump when we switch from the fallback image to the video (Cumulative Layout Shift, for the Lighthouse fans out there).

The main takeaway is that the video player is fast to load, it adapts to the user agent and does not interfere with regular page load or any other action that the user might take on their journey.
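The "load on first play, then reuse" idea can be sketched roughly as follows. This is an assumption-laden illustration, not our production code: the helper names `once` and `injectScript`, the script path, and the `data-video-poster` attribute are all hypothetical.

```javascript
// Wraps a loader so the expensive work is started at most once;
// later calls get the same promise back.
function once(load) {
  let pending = null;
  return function () {
    if (!pending) pending = load();
    return pending;
  };
}

// Hypothetical helper: injects a <script> tag and resolves when it has loaded.
function injectScript(src) {
  return new Promise(function (resolve, reject) {
    const script = document.createElement('script');
    script.src = src;
    script.onload = resolve;
    script.onerror = reject;
    document.head.appendChild(script);
  });
}

const loadPlayer = once(function () {
  return injectScript('/static/video.min.js'); // hypothetical path
});

// Browser-only wiring: nothing is downloaded until a poster image is clicked.
if (typeof document !== 'undefined') {
  document.querySelectorAll('[data-video-poster]').forEach(function (poster) {
    poster.addEventListener(
      'click',
      function () {
        loadPlayer().then(function () {
          // Swap the poster for the real player here.
        });
      },
      { once: true }
    );
  });
}
```

Because the promise is shared, a second video on the same page would reuse the already loaded library instead of requesting it again.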

⭐️ Lighthouse Note.

Lighthouse performance score increased by an average of 5 points compared to loading video playback libraries immediately.

JavaScript Optimization

If you read the framework section, you already know that we’ve disabled the Next.js runtime JavaScript. Still, we need some measure of interactivity.

For instance:

- Playing back videos (both self-hosted and YouTube)

- Toggling the main menu on small screens

- Showing the GraphQL playground

- The in-page search on the Learn Crystallize subpages.

All scripts operate outside of React land, and you’re left with writing good old Vanilla JS. Here is an example of how we deal with the solar wheel on the front page:

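// Attach a click handler to every item in the solar wheel navigation.
document.querySelectorAll('[data-solarnavitem]').forEach(listen);

function listen(el) {
  el.addEventListener('click', function () {
    // Read from el rather than the event target, so clicks on nested children still resolve.
    const outer = el.closest('[data-solaractive]');
    if (!outer) return;
    const item = el.dataset.solarnavitem;
    // Expose the active item both as an attribute and as a CSS custom property.
    outer.setAttribute('data-solaractive', item);
    outer.style.setProperty('--active-slide', item);
  });
}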

We could have introduced a step of transpiling, but we’ve deemed it to be too much hassle. In essence, we need simple click handlers in our scripts, plus loading external data now and then. No need for transpiling when our use cases are that simple, complemented by the fact that the modern web APIs have become so good that you can do the above things without much hassle.

We’ve sorted these use cases into three categories:

- Essential - site won’t really work without them (very few scripts are essential),

- Important - enables a few noticeable features and improves site experience for the visitor,

- Nice-to-have - tracker scripts, yup we have those as well.

Essential Scripts

The mobile menu toggle is essential, as there is a fair chance that a visitor would click that button as one of the first things they do on their journey. If that button takes a second or so to load, we run the risk that the visitor clicks the button before the script is loaded, and nothing will happen. A terrible experience!

All essential scripts are inlined on-page, the JavaScript written vanilla-style in a script with dangerouslySetInnerHTML. Yes, you read that right. Inlined vanilla. Here is what it looks like:

<script
  dangerouslySetInnerHTML={{
    __html: `
      (function () {
        console.log('code goes here')
      })();
    `,
  }}
/>

💡Note.

Notice the IIFE (immediately invoked function expression)? We wrap all our inlined code like this so there is little chance of code leaking to the outer window scope. Not a big thing, but we make sure that function and variable names will not collide with other scripts, like browser extensions, for instance.

With this approach, you’ll get no help from the IDE whatsoever, no linting, nothing. So obviously, we need to take great care when creating these scripts. They are fortunately not changed very often and are co-located with the component they are supposed to operate on, so we’re not running the risk of a lot of unused inlined code.

There is no easy way of minifying code like this, so we’ve decided to leave it as-is. A minifier could shave a few bytes off the uncompressed file. This does not matter much in the end, though, as the document is usually transferred from the origin compressed with Brotli or gzip, which greatly reduces the impact of skipping minification.

What it enables, though, is a great user experience. The toggle menu button is ready for user interaction from the moment it is visible, and the visitor will happily toggle the menu, not thinking twice about how it works.
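As an illustration of how small such an essential script can be, here is a minimal sketch of a menu toggle. The element ids `#menu-toggle` and `#main-menu` and the function name `toggleMenu` are hypothetical, and the use of `aria-expanded` and `hidden` is an assumption rather than a description of our actual markup.

```javascript
// Flips aria-expanded on the button and mirrors the state on the menu element.
function toggleMenu(button, menu) {
  const open = button.getAttribute('aria-expanded') !== 'true';
  button.setAttribute('aria-expanded', String(open));
  menu.hidden = !open;
  return open;
}

// Browser-only wiring, wrapped in an IIFE as described in the note above.
if (typeof document !== 'undefined') {
  (function () {
    const button = document.querySelector('#menu-toggle'); // hypothetical id
    const menu = document.querySelector('#main-menu'); // hypothetical id
    if (!button || !menu) return;
    button.addEventListener('click', function () {
      toggleMenu(button, menu);
    });
  })();
}
```

On a real page, the `toggleMenu` function would also live inside the IIFE; it is kept outside here only so it can be exercised on its own.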

Reactive Lazy Loading

Before we talk about how we handle the other scripts, let us cover an important precursor to that, reactive lazy loading. In order to know when to lazy load something, you need information from the visitor. Image lazy loading leans upon the built-in image attribute loading, leaving the heavy lifting to the browsers.

We cannot use that for lazy loading scripts and tailoring the user experience. For this, we use the Intersection Observer API, which is a great tool for letting developers know when a certain element intersects the viewport. We use it to watch DOM elements, get a callback when they are close to being shown in the viewport, and initiate the actual component loading at that time.

Sounds tricky? It is not. We’ve inlined this piece of code in all documents (it works on any website):

window._onIntersecting=function(e,r){e.__observer&&e.__observer.disconnect(),e.__observer=new IntersectionObserver(function([n]){n.isIntersecting&&(r(e),e.__observer.disconnect())},{rootMargin:"100% 0%",threshold:0}),e.__observer.observe(e)};

What the above code gives us is the ability to register a watcher for a DOM element, which takes a callback that fires when that element is close to the viewport. Not visible just yet, but close enough for you to start preparing for the visitor to interact with it. Here is how you can use it:

_onIntersecting(
  document.querySelector("#some-component"),
  function (element) {
    console.log(element, "is close to the viewport!");
  }
);

Easy peasy. With this, you can defer further download and execution until just the right time. Alright, with that out of the way, let’s talk about the important scripts.
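For readers who want to follow the minified helper step by step, here is an expanded version with the same behavior. One thing is added purely for illustration: an optional third parameter that lets you pass in the observer constructor, which is an assumption made so the function can be exercised outside a browser.

```javascript
function onIntersecting(element, callback, Observer) {
  // Assumption for testability: the observer constructor can be injected.
  const ObserverClass = Observer || globalThis.IntersectionObserver;

  // Drop any observer registered earlier for the same element.
  if (element.__observer) element.__observer.disconnect();

  element.__observer = new ObserverClass(
    function ([entry]) {
      if (entry.isIntersecting) {
        callback(element);
        // Fire only once, then stop watching.
        element.__observer.disconnect();
      }
    },
    {
      // Grow the observed area by one full viewport height above and below,
      // so the callback fires before the element is actually visible.
      rootMargin: '100% 0%',
      threshold: 0,
    }
  );

  element.__observer.observe(element);
}
```

The `rootMargin` value is what turns "is visible" into "is about to be visible", which is the window of time used for downloading and initializing a component.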

Important Scripts

The rest of the scripts are important to the site but not essential, in the sense that the user can easily navigate and get a pleasant experience without them, though they might unknowingly miss out on some features.

A good example of that is the embedded GraphQL playground. An important piece of the website, if you ask us, as it showcases the speed of our API and how easy it is to pull just the right amount of data. Performance, performance, performance.

The playground uses GraphiQL for the display of the query and the response, and that, in turn, requires react and react-dom. Yep, big chunks of JavaScript are needed for this. So this is obviously lazy-loaded, and that is done in two parts.

Part one is the lazy loading of a script initiate-graphql-playground.js (you can check our source code for the dirty details). This file acts as the orchestrator and initiates a listener that looks for valid targets on the page, elements with the className playground-graphiql. For each of the playgrounds it encounters, it will wait until the target element is close to the viewport (see reactive lazy loading) and then, in turn, load all its dependencies (in the correct order), initiate the playground and wrap things up by caching the work so that it can be reused by other playgrounds later in the session.

For the visitor, the playground will be ready by the time they reach it, but only if they scroll to it. If they never get close to that section, the code will never run, and the user (and the planet) happily continue browsing without those extra bytes running through their device.
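The "load dependencies in the correct order, then reuse the work" part can be sketched like this. It is not the contents of initiate-graphql-playground.js: the function names and the file paths are hypothetical, and `loadOne` stands in for whatever actually fetches a script (typically injecting a script tag and resolving on its load event).

```javascript
// Loads each dependency only after the previous one has finished.
async function loadInOrder(urls, loadOne) {
  for (const url of urls) {
    await loadOne(url);
  }
}

// Shared by every playground on the page, so the work happens only once.
let playgroundReady = null;

function ensurePlaygroundDependencies(loadOne) {
  if (!playgroundReady) {
    playgroundReady = loadInOrder(
      // Hypothetical paths; react must be present before react-dom and GraphiQL.
      ['/react.js', '/react-dom.js', '/graphiql.js'],
      loadOne
    );
  }
  return playgroundReady;
}
```

Each playground element would call `ensurePlaygroundDependencies` from its intersection callback and initialize itself once the returned promise resolves. The first playground pays for the download; the rest wait on the same promise.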

⭐️ Lighthouse Note.

Lighthouse performance score increased by an average of 8 points with this lazy loading technique.

Nice-to-Have Scripts

At the bottom of the priority chain, we find our tracking scripts. These are not helping the visitor at all and will only impose extra bytes on them to offer us insights into user behavior on the website. Bad for the user but fun for us, right?

Well, you could argue that it is good for the user in the long run, as we analyze user behavior and optimize accordingly, but let’s turn a blind eye to that for the sake of argument.

We’ve been using Google Tag Manager for this purpose for a while, with custom triggers that allow us to differentiate between when things can execute. There are the important tags (read: nice-to-have important, so not really that important) and the less important tags.

Important tags run as part of the page load stage, though at the very end of it, using a custom GTM trigger. Less important tags run later, when the browser is idle. We use requestIdleCallback in combination with a solid timeout to ensure that those tags run only when the visitor is not busy with something else, such as navigating or waiting for a component to finish its lazy loading.
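A minimal sketch of the idle scheduling could look like the following. The function name `runWhenIdle`, the injectable `scope` parameter, and the 5000 ms value are assumptions for illustration; the actual wiring into GTM triggers is not shown.

```javascript
// Runs a task when the browser is idle, or after timeoutMs at the latest.
function runWhenIdle(task, timeoutMs, scope) {
  // Assumption for testability: the global scope can be injected.
  const host = scope || globalThis;

  if (typeof host.requestIdleCallback === 'function') {
    // The timeout forces the task to run even if the browser never becomes idle.
    return host.requestIdleCallback(task, { timeout: timeoutMs });
  }

  // Fallback for environments without requestIdleCallback.
  return host.setTimeout(task, timeoutMs);
}

// Browser-only usage: fire the less important tags once things have calmed down.
if (typeof window !== 'undefined') {
  runWhenIdle(function () {
    // Trigger the less important tags here.
  }, 5000);
}
```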

The consequence of using a third-party script directly is something we’ve covered in our Keeping Websites Fast when Loading Google Tag Manager blog post. On our website, we looked at server-side GTM but opted for using Partytown instead.

With Partytown, all the tracking scripts are offloaded to a web worker and claim very few resources from the main thread. As a result, visitors pay very little penalty for our tracking needs and can happily browse our pages without the browser being occupied with tracking.

⭐️ Lighthouse Note.

Lighthouse performance score increased by an average of 6 points by using Partytown.

Moving Forward

Website performance is not something you accomplish once and then forget about. Devices become quicker, browsers change, and so do the ways we make our websites. Staying vigilant and on top of dev trends is a must for everyone involved in making your website fast.

We tend to say that milliseconds matter a lot. This is not merely a phrase we like to use. It is a steadfast commitment of our team to user-centric performance that underscores every decision we make at Crystallize.

Switch to an eCommerce platform that helps rather than hinders your website's performance.

Set up a personal 1-on-1 demo today to see how Crystallize can help your business and your website with better performance results. Or, why not SIGN UP for FREE and start building performant shops yourself.
